Using Speechly CLI

Speechly's Command Line Interface (CLI) lets you interact with Speechly from the comfort of your terminal. Use Speechly CLI to manage your projects and applications, deploy new versions, download configurations, evaluate accuracy and more.

Installation

- macOS

- Windows

- Linux

On macOS, use the Homebrew package manager:

brew tap speechly/tap

brew install speechly

Updating Speechly CLI:

brew upgrade speechly

On Windows, use the Scoop package manager:

scoop bucket add speechly https://github.com/speechly/scoop-bucket

scoop install speechly

Updating Speechly CLI:

scoop update speechly

For Linux, we provide pre-compiled binary releases; see GitHub Releases. A Docker image is also built and published.

Authentication

Running any speechly command for the first time will give you an error saying Please add a project first. This is normal: Speechly CLI uses API tokens to access existing projects, and by default no projects have been added.

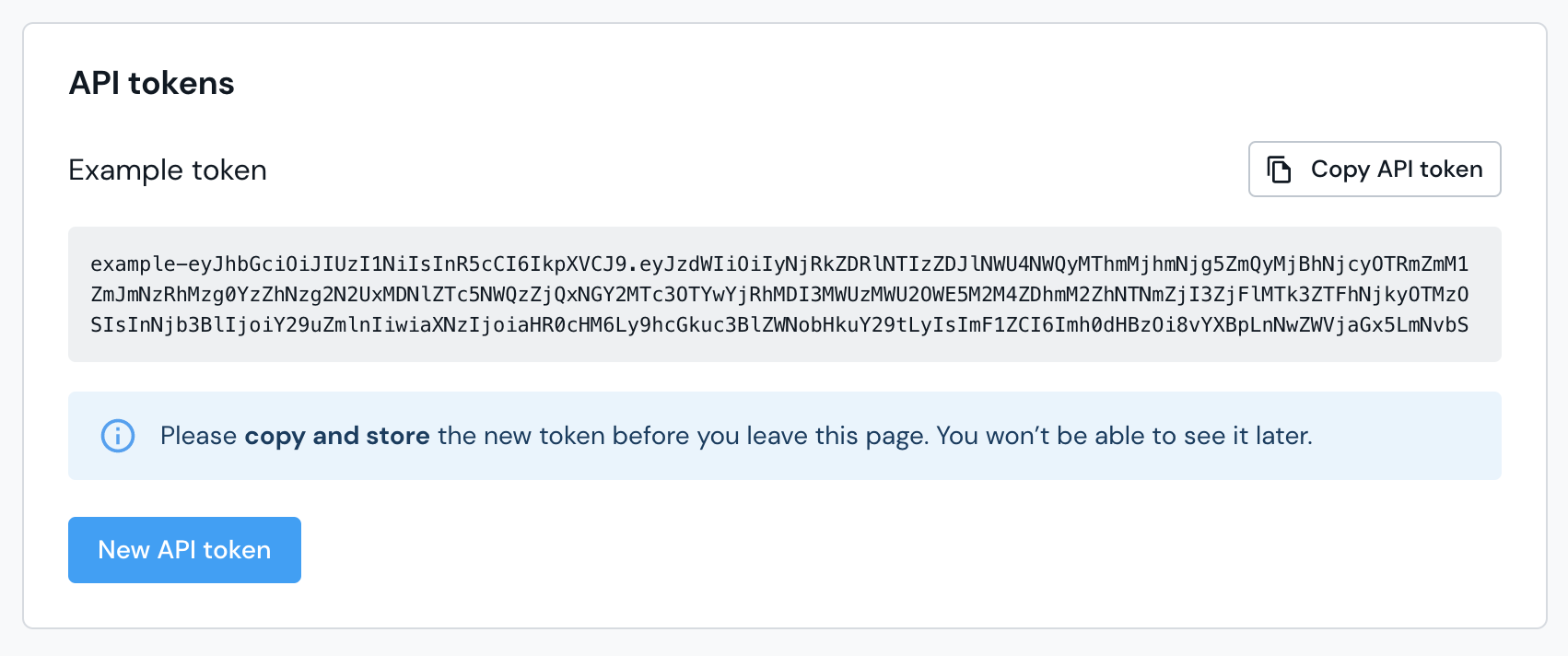

Create an API token

- Log in to Speechly Dashboard

- From the Projects menu, select the project for which you want to create the token

- Go to Project Settings → API tokens and create a new token

- Copy and store the token; you'll need it soon

Adding your project

Use the following command to add your project:

speechly projects add --apikey YOUR_API_TOKEN

# Alternatively, you can set the API token as an environment variable SPEECHLY_APIKEY

Now you are ready to start using Speechly CLI. To see all applications in this project, run the command:

speechly list

API tokens are project-specific. If you need to access multiple projects using Speechly CLI, you must create a separate API token for each project. See Managing multiple projects to learn more.
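In scripts, the SPEECHLY_APIKEY alternative mentioned above can be checked before any command runs. The following is a minimal sketch, not part of Speechly CLI: the helper name require_apikey is hypothetical, and it only tests that the variable is set and non-empty, not that the token is valid.

```shell
# Hypothetical helper: fail early when SPEECHLY_APIKEY is missing or empty.
require_apikey() {
  if [ -z "${SPEECHLY_APIKEY:-}" ]; then
    echo "SPEECHLY_APIKEY is not set; create an API token in Speechly Dashboard first" >&2
    return 1
  fi
}

# Usage: list applications only when a token is available.
# require_apikey && speechly list
```

Failing early like this keeps a missing token from surfacing later as a less obvious error in the middle of a script.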

Updating an API token

Updating an API token is done by modifying the Speechly CLI settings file. You can see where the settings file is located by running:

speechly projects

Settings file used: /path/to/home/.speechly.yaml

Open the .speechly.yaml file in a text editor. You should see something like this:

contexts:
  - name: My Project
    host: api.speechly.com
    apikey: YOUR_API_TOKEN
    remotename: My Project
current-context: My Project

Generate a new token and replace the old one with it. Ensure that you are viewing the correct project in Speechly Dashboard before generating the token.
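If you would rather not edit the file by hand, the replacement can be scripted. This is a rough sketch under stated assumptions: replace_apikey is a hypothetical helper, not a Speechly CLI command, and it assumes the token is stored unquoted on a line of the form apikey: TOKEN, as in the sample above. It keeps a .bak copy of the original file.

```shell
# Hypothetical helper: swap an old token for a new one in a settings file.
# Usage: replace_apikey /path/to/home/.speechly.yaml OLD_TOKEN NEW_TOKEN
replace_apikey() (
  file=$1 old=$2 new=$3
  # Keep a backup so the previous settings can be restored.
  cp "$file" "$file.bak" || exit 1
  # Rewrite only the apikey line whose value equals the old token,
  # preserving that line's indentation.
  awk -v old="$old" -v new="$new" '
    $1 == "apikey:" && $2 == old {
      match($0, /^[ \t]*/)
      print substr($0, 1, RLENGTH) "apikey: " new
      next
    }
    { print }
  ' "$file.bak" > "$file"
)
```

Matching on the old token value rather than on the project name means that only the entry you intend to change is touched when the file lists several projects.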

Usage

After authenticating Speechly CLI, you can get a list of commands by running:

speechly

To get a list of available sub-commands, arguments and flags for a command, run:

speechly [command]

Speechly CLI follows an approach similar to git or docker, where different functionalities of the tool are accessed by specifying a command followed by arguments to this command.

Speechly CLI workflow

Working with Speechly CLI and a code editor.

Speechly and Git

Version controlling your application configuration using git (or similar) is a great way to keep track of changes. If you use GitHub (or similar) to store your code, remember that pushing changes doesn't trigger a deployment for your Speechly application. For this you'll use the deploy command.

When your configuration lives in git, you will most likely use git pull to fetch the newest code, including your Speechly configuration. If you are not working with git, or if your Speechly configuration lives outside the repository, use the speechly download command to fetch the latest configuration.

Example project structure

We recommend creating a separate directory for your application's configuration.

my-app/

├─ node_modules/

├─ public/

│ ├─ favicon.ico

│ ├─ index.html

│ ├─ robots.txt

├─ src/

│ ├─ index.css

│ ├─ index.js

├─ config/

│ ├─ config.yaml

│ ├─ import.csv

├─ .gitignore

├─ package.json

├─ README.md

Downloading an application

To download a local copy of a deployed application configuration, you can use the following command:

speechly download YOUR-APP-ID config/

Deploying an application

Once you're happy with your changes, you can deploy them by using the command:

speechly deploy YOUR-APP-ID config/

Speechly CLI builds and uploads a deployment package that contains all files in the specified directory. We therefore highly recommend storing only files that are relevant to the configuration in that directory. In the simplest case this is only the config.yaml.
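A deploy can be guarded with a validation step first. The sketch below is one possible arrangement, not an official workflow: validate_and_deploy is a hypothetical helper, the validate command and the -a and -w flags are taken from the automation examples later in this guide, and the check for config.yaml assumes the simplest-case layout described above.

```shell
# Hypothetical helper: validate a configuration directory, then deploy it.
# Usage: validate_and_deploy YOUR-APP-ID config/
validate_and_deploy() (
  app_id=$1 dir=$2
  # Assumes the simplest-case layout, where the directory holds config.yaml.
  if [ ! -f "$dir/config.yaml" ]; then
    echo "no config.yaml found in $dir" >&2
    exit 1
  fi
  # Stop before deploying if validation fails.
  speechly validate -a "$app_id" "$dir" || exit 1
  # -a and -w are used as in the automation examples in this guide.
  speechly deploy -a "$app_id" "$dir" -w
)
```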

Listing applications

Your project may contain multiple applications. To list all applications in the current project, run:

speechly list

This is useful for checking the deployment status of your application or for copying App IDs when working with separate production and development applications.

Accuracy evaluation

Speechly CLI supports evaluating ASR and NLU accuracy of your application. This helps you understand how accurate your application is at transcribing audio and at detecting entities and intents correctly.

You evaluate ASR and NLU accuracy by running:

# asr

speechly evaluate asr YOUR-APP-ID ground-truths.jsonl

# nlu

speechly evaluate nlu YOUR-APP-ID ground-truths.txt

See Evaluate ASR accuracy and Evaluate NLU accuracy to learn more.

Usage in automation

Fully automated usage is possible with only the API token and the App ID. Because the CLI is also published to Docker Hub, it can be added as a run step in any tool that supports Docker images.

Basic example with sh, mounting the current directory as the working directory:

export SPEECHLY_APIKEY=YOUR_API_TOKEN

export APP_ID=YOUR-APP-ID

# validate app:

docker run -it --rm -e SPEECHLY_APIKEY -v "$(pwd)":"$(pwd)" -w "$(pwd)" speechly/cli validate -a "${APP_ID}" config-dir

# deploy app:

docker run -it --rm -e SPEECHLY_APIKEY -v "$(pwd)":"$(pwd)" -w "$(pwd)" speechly/cli deploy -a "${APP_ID}" config-dir -w
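The two commands above share the same docker run prefix, so a script can wrap it once. This is a sketch only: speechly_docker is a hypothetical function name, and dropping -it is an assumption that the script runs in a CI job with no terminal attached. Keep the flags if you run it interactively.

```shell
# Hypothetical wrapper: run Speechly CLI from the Docker image as if it were
# installed locally. SPEECHLY_APIKEY must be exported in the calling shell.
speechly_docker() {
  # -it is left out on the assumption that no terminal is attached (CI).
  docker run --rm -e SPEECHLY_APIKEY \
    -v "$(pwd)":"$(pwd)" -w "$(pwd)" \
    speechly/cli "$@"
}

# Usage, mirroring the two commands above:
# speechly_docker validate -a "${APP_ID}" config-dir
# speechly_docker deploy -a "${APP_ID}" config-dir -w
```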

GitHub Actions

The configuration validation and deployment tasks can be set up as separate workflows in GitHub Actions. The following examples expect the App ID and API token to be set up as repository secrets. They also expect the configuration file(s) to be located in configuration-directory in the root of the repository.

Configuration validation

name: validate Speechly config
on:
  pull_request:
    branches:
      - master
    paths:
      - "configuration-directory/**"
jobs:
  validate:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v2
      - uses: docker://speechly/cli:latest
        with:
          args: validate -a ${{ secrets.APPID }} configuration-directory
        env:
          SPEECHLY_APIKEY: ${{ secrets.SPEECHLY_APIKEY }}

Configuration deployment

name: deploy Speechly config
on:
  push:
    branches:
      - master
    paths:
      - "configuration-directory/**"
jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v2
      - uses: docker://speechly/cli:latest
        with:
          args: deploy -a ${{ secrets.APPID }} configuration-directory -w
        env:
          SPEECHLY_APIKEY: ${{ secrets.SPEECHLY_APIKEY }}

Autocompletion

Speechly CLI provides a command for generating an autocompletion script for zsh, sh, fish and powershell. We'll cover installation on zsh, since it is the default shell on macOS nowadays. Your installation process may vary if you're using a different shell.

First save the autocomplete script to a file:

speechly completion zsh > _speechly

Then move it to a folder in your $fpath:

mv _speechly /usr/local/share/zsh/site-functions

Installing in your home directory

If you don't have permission to write to /usr/local and don't want to change that, you can move the autocompletion script to your home directory instead:

mkdir ~/.completions

mv _speechly ~/.completions

Remember to add the new directory to your $fpath in ~/.zshrc:

fpath=( ~/.completions "${fpath[@]}" )

Reload the shell:

source ~/.zshrc
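The home-directory steps above can be collected into one function that is safe to run more than once. This is a minimal sketch: install_speechly_completion is a hypothetical helper, and it assumes the target directory path contains no spaces. It uses only the speechly completion zsh command shown earlier.

```shell
# Hypothetical helper: install zsh completion into a home-directory folder.
# Usage: install_speechly_completion ~/.completions ~/.zshrc
install_speechly_completion() (
  dir=$1 zshrc=$2
  mkdir -p "$dir" || exit 1
  # Generate the completion script directly into the target folder.
  speechly completion zsh > "$dir/_speechly" || exit 1
  # Add the folder to $fpath only once, so reruns do not duplicate the line.
  line="fpath=( $dir \"\${fpath[@]}\" )"
  if ! grep -qsF "$line" "$zshrc"; then
    printf '%s\n' "$line" >> "$zshrc"
  fi
)
```

After running it, reload the shell as described above for the change to take effect.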

Enjoy the productivity boost provided by autocompletion!